What Is Agentic AI? The Complete Technical Explainer

- Generative AI produces content; agentic AI produces outcomes — the difference is a planning loop that runs continuously, not once

- The four core components of every agentic system: the LLM reasoning engine, tools/APIs, memory (short- and long-term), and the planning loop that ties them together

- Gartner predicts agentic AI will autonomously resolve 80% of common customer service issues by 2029 — without human intervention

- Multi-agent systems multiply debugging surface area — start with a single agent that does one thing well before adding orchestration complexity

- Agents that can take actions in production systems need strict permissions models: an agent that can send emails, modify databases, and deploy code can cascade a single bad decision into real damage

- Model Context Protocol (MCP) is emerging as the open standard for connecting AI agents to external tools — the same integration works across any MCP-compatible platform

Agentic AI is artificial intelligence that can independently plan, make decisions, and take actions to achieve a goal — without a human specifying every step. It's not a chatbot that responds to prompts. It's a system that perceives its environment, reasons about what to do next, executes actions using external tools, and learns from the results through feedback loops.

That's the one-paragraph definition. Now let's go deep.

How agentic AI is different from traditional AI and generative AI

There are three distinct waves of AI, and they're often confused:

Traditional AI uses rule-based algorithms and machine learning models trained on specific datasets for specific tasks. Think spam filters, recommendation engines, and forecasting models. These systems are powerful for problem-solving within narrow domains, but they can't reason about novel situations. A fraud detection model that's excellent at flagging suspicious transactions can't also write you an email about it.

Generative AI — the ChatGPT wave — uses large language models (LLMs) to produce outputs (text, code, images) based on prompts. You ask a question, you get an answer. It's reactive: you provide input, it produces output, conversation over. Tools like ChatGPT from OpenAI and Claude from Anthropic fall into this category when used in their default chat mode.

Agentic AI takes generative AI and wraps it in a loop. Instead of generating a single output and stopping, agentic AI systems use the LLM as a reasoning engine that plans multi-step actions, calls APIs and external tools, evaluates results, and adjusts its approach in real time. The LLM doesn't just answer; it acts.

Here's the key distinction: generative AI produces content. Agentic AI produces outcomes.

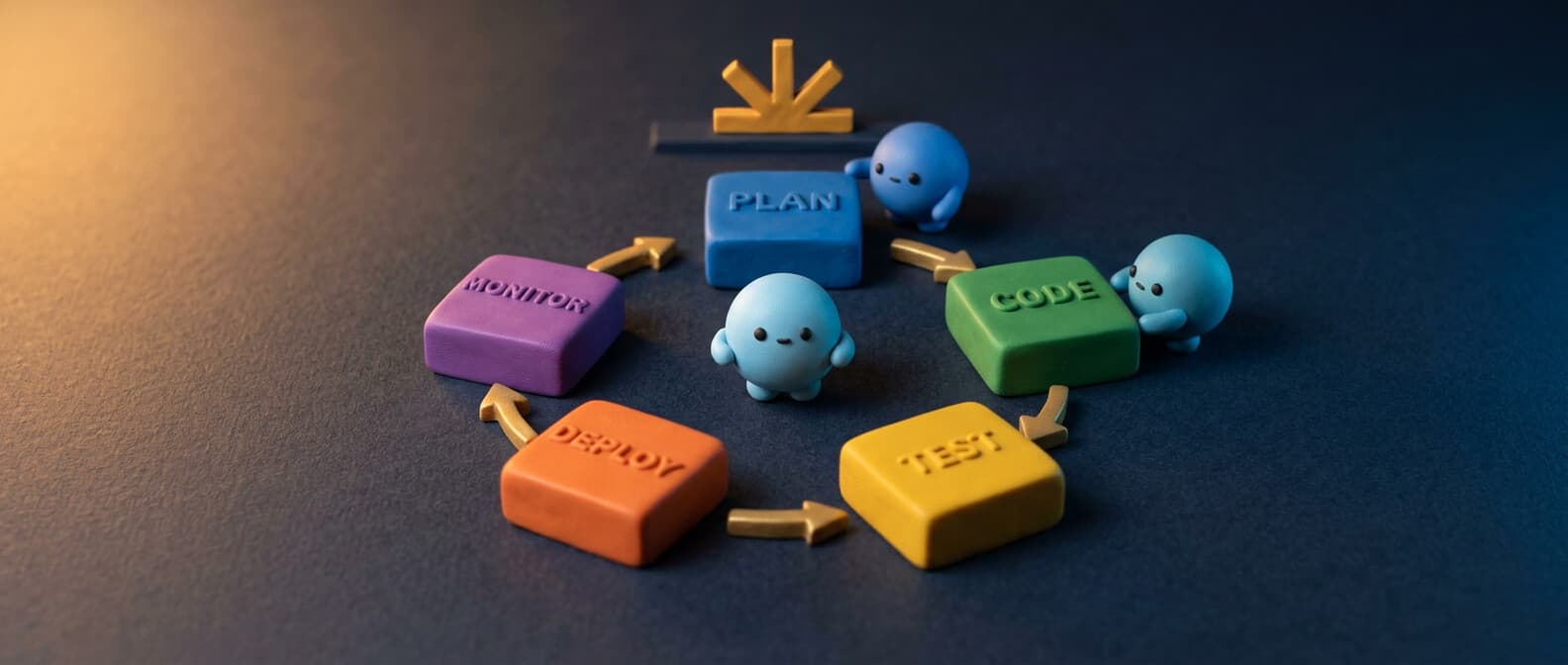

AWS describes this architecture as a four-stage cycle: perceive (collect real-time data), reason (plan using the LLM), act (execute tasks via tools), and learn (refine through reinforcement learning). That cycle runs continuously, not once.
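Here is a toy Python sketch that makes the cycle concrete. The environment is a hidden-number guessing game. The reason step is a deterministic bisection rule standing in for an LLM call, so the example runs without any model or API. All names are hypothetical. The learn step only narrows the agent's working beliefs from feedback; it is not reinforcement learning.

```python
from dataclasses import dataclass


@dataclass
class GuessingEnv:
    """Toy environment: the agent must find a hidden integer."""
    secret: int

    def observe(self, guess: int) -> str:
        # The feedback signal the agent perceives after each action.
        if guess < self.secret:
            return "higher"
        if guess > self.secret:
            return "lower"
        return "correct"


def run_cycle(env: GuessingEnv, low: int, high: int, max_steps: int = 20) -> tuple[int, int]:
    """Repeat reason -> act -> perceive -> learn until the goal is met."""
    for step in range(1, max_steps + 1):
        # Reason: stand-in for an LLM call; pick the midpoint of the current belief range.
        guess = (low + high) // 2
        # Act on the environment, then perceive the feedback that action produced.
        feedback = env.observe(guess)
        if feedback == "correct":
            return guess, step
        # Learn: update beliefs so the next reasoning step is better informed.
        if feedback == "higher":
            low = guess + 1
        else:
            high = guess - 1
    raise RuntimeError("step budget exhausted before reaching the goal")
```

For example, `run_cycle(GuessingEnv(secret=37), 0, 100)` returns `(37, 3)`, meaning the goal was reached after three passes through the cycle.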

The technical architecture of agentic AI

Every agentic AI system has four core components. Understanding them is the difference between building something real and wrapping a ChatGPT API call in a while loop.

1. The LLM (reasoning engine)

Large language models like GPT-4, Claude, and Gemini provide the reasoning layer. They interpret context, develop action plans, and adapt based on new information. But the LLM alone isn't an agent. It's the brain — you still need a body.

The LLM handles semantic reasoning, natural language processing, and orchestration of what to do next. Agents also commonly rely on chain-of-thought prompting to break complex problems into smaller steps, which is critical for goal-oriented behavior.
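One common way to get step-by-step decomposition out of a model is to ask for a numbered plan and parse it into subtasks that the loop can execute. This is a plan-style variant of chain-of-thought prompting. The sketch below assumes the model replies in plain text with lines like `1. ...`. The prompt wording and the `parse_plan` helper are illustrative and do not come from any specific framework.

```python
import re

PLAN_PROMPT = (
    "Goal: {goal}\n"
    "Think step by step. Reply with a numbered list of concrete subtasks, "
    "one per line, and nothing else."
)

# Matches lines such as "1. Do X" or "2) Do Y", tolerating surrounding whitespace.
_STEP = re.compile(r"^\s*\d+[.)]\s+(.*\S)\s*$")


def build_plan_prompt(goal: str) -> str:
    """Fill the planning template with the user's goal."""
    return PLAN_PROMPT.format(goal=goal)


def parse_plan(model_reply: str) -> list[str]:
    """Extract ordered subtasks from a numbered-list reply; other lines are ignored."""
    steps = []
    for line in model_reply.splitlines():
        match = _STEP.match(line)
        if match:
            steps.append(match.group(1))
    return steps
```

Ignoring non-numbered lines makes the parser tolerant of chatty preambles such as "Here is the plan:". Real systems often request structured JSON output instead.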

2. Tools and APIs

This is what makes agentic AI actually useful. Agents connect to external tools through APIs — databases, web services, code execution environments, file systems, and third-party apps. Without tools, an LLM can only generate text. With tools, it can query a database, send an email, update a CRM, deploy code, or analyze real-time data.
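Most tool-calling setups follow the same basic pattern. The application describes each tool to the model (name, description, parameter schema). The model replies with a tool name plus JSON-encoded arguments. The application then runs the matching function and feeds the result back. The sketch below covers only the application side. The `ToolCall` shape, the `tool` decorator, and the single `get_order_status` tool are hypothetical stand-ins, and the model's reply is constructed by hand rather than produced by a real LLM.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class ToolCall:
    """What a model's tool-call reply is assumed to contain."""
    name: str
    arguments: str  # JSON-encoded arguments


TOOLS: dict[str, Callable[..., Any]] = {}
SCHEMAS: list[dict] = []  # the descriptions you would send to the model


def tool(description: str, parameters: dict):
    """Register a Python function as a tool and record the schema shown to the model."""
    def register(fn: Callable[..., Any]) -> Callable[..., Any]:
        TOOLS[fn.__name__] = fn
        SCHEMAS.append({"name": fn.__name__, "description": description,
                        "parameters": parameters})
        return fn
    return register


@tool("Look up an order's status by ID.",
      {"type": "object", "properties": {"order_id": {"type": "string"}},
       "required": ["order_id"]})
def get_order_status(order_id: str) -> str:
    # Stand-in for a real database or API query.
    return {"A100": "shipped"}.get(order_id, "not found")


def dispatch(call: ToolCall) -> str:
    """Run the requested tool; errors come back as text the model can react to."""
    fn = TOOLS.get(call.name)
    if fn is None:
        return f"error: unknown tool {call.name!r}"
    try:
        return str(fn(**json.loads(call.arguments)))
    except (TypeError, ValueError) as exc:  # malformed JSON or wrong arguments
        return f"error: {exc}"
```

Returning errors as strings instead of raising them keeps the loop alive. The model sees what went wrong and can retry with corrected arguments.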

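The Model Context Protocol (MCP), introduced by Anthropic in late 2024, is emerging as the open standard for connecting AI agents to data sources and tools. It gives AI platforms a common protocol for integrating with external services, so a tool exposed once as an MCP server can be used by any MCP-compatible client.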
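MCP messages are JSON-RPC 2.0. Here is a rough sketch of the client side. It assumes a connection that has already completed MCP's `initialize` handshake and leaves out the stdio or HTTP transport entirely. A client discovers tools with a `tools/list` request and invokes one with `tools/call`. The helper names and the `get_weather` tool are hypothetical; check the MCP specification for the full message shapes.

```python
import itertools
import json
from typing import Optional

_request_ids = itertools.count(1)  # each JSON-RPC request needs a unique id


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request, the message format MCP is built on."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


def list_tools_request() -> str:
    """Ask the server which tools it exposes (names, descriptions, input schemas)."""
    return jsonrpc_request("tools/list")


def call_tool_request(name: str, arguments: dict) -> str:
    """Invoke one tool by name with arguments matching its input schema."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


def text_from_result(response: str) -> str:
    """Join the text items of a tools/call result; raise on protocol or tool errors."""
    reply = json.loads(response)
    if "error" in reply:  # protocol-level failure arrives under "error", not "result"
        raise RuntimeError(reply["error"].get("message", "JSON-RPC error"))
    result = reply["result"]
    text = "\n".join(item["text"] for item in result.get("content", [])
                     if item.get("type") == "text")
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError(text)
    return text
```

The agent-side code does not change per service. Only the server behind the connection does, and that is where the "same integration works everywhere" property comes from.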

3. Memory (short-term and long-term)

Traditional AI chatbots forget everything between conversations. Agentic AI systems maintain state. Short-term memory holds the current task context: what's been done and what's left. Long-term memory stores learned patterns, user preferences, and historical outcomes across sessions.
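Here is a minimal sketch of the two tiers. For illustration it assumes a plain JSON file per user is an acceptable long-term store; production systems typically use a database or vector store. Class and method names are hypothetical.

```python
import json
from collections import deque
from pathlib import Path


class ShortTermMemory:
    """Current-task context: recent turns plus what's done and what's left."""

    def __init__(self, subtasks: list[str], max_turns: int = 6):
        self.remaining = list(subtasks)
        self.done: list[str] = []
        self.turns = deque(maxlen=max_turns)  # oldest turns drop off automatically

    def record(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})

    def complete(self, subtask: str) -> None:
        self.remaining.remove(subtask)
        self.done.append(subtask)


class LongTermMemory:
    """Facts that should survive across sessions, persisted as one JSON file per user."""

    def __init__(self, directory: str, user_id: str):
        self.path = Path(directory) / f"{user_id}.json"
        self.facts = json.loads(self.path.read_text()) if self.path.exists() else {}

    def remember(self, key: str, value) -> None:
        self.facts[key] = value
        self.path.write_text(json.dumps(self.facts))  # write on every update for the next session

    def recall(self, key: str, default=None):
        return self.facts.get(key, default)
```

The bounded `deque` keeps the prompt within the model's context budget. Anything worth keeping beyond the current task is explicitly promoted to long-term memory.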

This is implemented through vector databases, retrieval-augmented generation (RAG), and conversation history persistence. RAG lets agents search through large datasets and knowledge bases to ground their outputs in retrieved data, which reduces (but does not eliminate) hallucination.
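The retrieval half of RAG can be sketched in a few lines. To keep the example self-contained, it substitutes word-count vectors for learned embeddings. A real system would call an embedding model and a vector database, and the `VectorStore` class here is a hypothetical in-memory stand-in. The ranking step, cosine similarity between query and document vectors, is the same idea either way.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorStore:
    """In-memory list of (vector, text) pairs, searched by brute force."""

    def __init__(self):
        self.items: list[tuple[Counter, str]] = []

    def add(self, text: str) -> None:
        self.items.append((embed(text), text))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


def grounded_prompt(store: VectorStore, question: str) -> str:
    """Retrieve relevant passages and instruct the model to answer only from them."""
    context = "\n".join(f"- {p}" for p in store.search(question))
    return ("Answer using only the context below. If it is not covered, say so.\n"
            f"Context:\n{context}\n\nQuestion: {question}")
```

The instruction to answer only from the retrieved context, and to admit when it is missing, is what does the grounding. Retrieval alone does not stop a model from ignoring the context.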

4. The planning loop

The planning loop is the orchestration layer that ties everything together. It's what turns an LLM with tools into an actual agent. The loop looks like this:

1. Receive goal or trigger

2. Plan: break goal into subtasks

3. Execute: call tools/APIs for current subtask

4. Evaluate: did it work? Check outputs against success criteria

5. Adjust: if not, replan. If yes, move to next subtask

6. Repeat until goal is achieved or failure threshold hit

This is fundamentally different from a simple workflow. Workflows are deterministic — step A always leads to step B. Agent loops are adaptive. If step B fails, the agent reasons about why and tries a different approach. That's the decision-making capability that separates agentic AI from workflow automation.
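The six steps above map onto a short loop. In this sketch, `plan`, `execute`, `succeeded`, and `replan` are hypothetical callables passed in by the caller. In a real agent, `plan`, `succeeded`, and `replan` would typically be LLM calls and `execute` a tool dispatcher. Here they are plain functions so the control flow can be tested on its own.

```python
from typing import Callable


def run_agent(
    goal: str,
    plan: Callable[[str], list[str]],
    execute: Callable[[str], str],
    succeeded: Callable[[str, str], bool],
    replan: Callable[[str, str], str],
    max_failures: int = 3,
) -> list[tuple[str, str]]:
    """Plan -> execute -> evaluate -> adjust, until done or the failure budget is spent."""
    queue = plan(goal)                      # step 2: break the goal into subtasks
    completed: list[tuple[str, str]] = []
    failures = 0                            # total failures across the whole run
    while queue:                            # step 6: repeat until the goal is achieved
        subtask = queue.pop(0)
        result = execute(subtask)           # step 3: call tools/APIs for this subtask
        if succeeded(subtask, result):      # step 4: check against success criteria
            completed.append((subtask, result))
            continue                        # step 5: success, move to the next subtask
        failures += 1
        if failures >= max_failures:        # ...or stop at the failure threshold
            raise RuntimeError(f"gave up on {subtask!r} after {failures} failures")
        queue.insert(0, replan(subtask, result))  # step 5: failure, try another approach
    return completed
```

A fixed workflow would stop (or blindly continue) at the first failure. Here a failed subtask is replaced by a revised one, and the failure budget keeps the agent from retrying forever.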

Real-world use cases across industries

Agentic AI isn't theoretical. Here's where agentic AI is already at work:

Software development

AI agents write code, run tests, fix bugs, and create pull requests autonomously. GitHub Copilot's coding agent lets you assign an issue directly to an AI that writes the code, opens a pull request, and responds to review feedback. Claude Code runs in your terminal, understands your codebase, and handles git workflows through natural language commands. These are real agentic AI applications, not autocomplete.

According to GitHub's research, developers using Copilot completed a benchmark coding task 55% faster than those without it, and 73% of surveyed developers said it helped them stay in the flow. And that's just code completion; agentic coding tools aim to go further by handling more of the debugging and deployment cycle.

Customer support

Agentic AI handles customer support by understanding intent, accessing the customer's account data, resolving issues, and escalating only when confidence is low. This isn't the phone tree chatbots of 2020. Modern support agents use RAG to pull from knowledge bases, access real-time data from CRM systems, and handle multi-turn conversations that span billing, returns, and technical troubleshooting.

Gartner predicts that by 2029, agentic AI will autonomously resolve 80% of common customer service issues without human intervention.

Healthcare

In healthcare, agentic AI systems analyze patient data, cross-reference medical literature, and suggest treatment plans, with mandatory human oversight at every step. Deployments here require explicit guardrails and validation because the stakes are life-or-death. Agents in healthcare don't make final decisions; they prepare comprehensive recommendations for clinicians and handle repetitive tasks like documentation and scheduling.

Supply chain and operations

Supply chain management is a natural fit for agentic AI because it involves complex workflows with constantly changing variables. Agents monitor inventory in real time, forecast demand using machine learning models, reorder supplies through APIs, and adapt routes based on weather or logistics data. The goal-oriented nature of supply chain optimization (minimize cost, maximize delivery speed) maps well to how agentic AI reasons through problems.

Cybersecurity

In cybersecurity, autonomous agents monitor network traffic, detect anomalies, and respond to threats in real time without waiting for a human analyst. They can isolate compromised systems, block suspicious IPs, and generate incident reports. The benefit: response times measured in seconds instead of hours. The risk: false positives that lock out legitimate users, which is why humans must be able to review and quickly reverse the agent's actions.


The benefits of agentic AI (and the honest limits)

The benefits of agentic AI are real:

- Scale without headcount. AI-powered agents handle repetitive tasks 24/7 — data processing, monitoring, routine decision-making — freeing humans for complex problems that require creativity and judgment.

- Faster execution. An agent that can reason, plan, and act in seconds accomplishes what might take a human team hours or days to coordinate across multiple systems and apps.

- Adaptive problem-solving. Unlike traditional AI that breaks on edge cases, agentic systems adjust their approach based on feedback loops and real-time data.

- End-to-end automation. Instead of automating individual steps, agentic AI can streamline entire business processes from trigger to completion — including robotic process automation (RPA) tasks that previously required brittle scripts.

But the limits are just as real:

- Hallucination risk. LLMs can confidently generate wrong outputs. Without validation layers and guardrails, agents will act on bad information.

- Debugging is hard. When a multi-agent system makes a mistake three steps deep, tracing the failure requires robust logging and observability tools. Troubleshooting agentic systems is significantly harder than troubleshooting deterministic workflows.

- Permissions and safety. Agents that can take actions in production systems need strict permissions models. If an agent can send emails, modify databases, and deploy code, a single bad decision can cascade. Human oversight isn't optional — it's architecturally required.
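A low-tech way to make multi-step failures traceable is to wrap each agent step in a structured record tied to a run ID. The record holds the step number, inputs, output, status, and timing, and is emitted as JSON lines that a logging or observability tool can ingest. This is a hypothetical minimal tracer, not any particular vendor's SDK.

```python
import json
import time
import uuid
from contextlib import contextmanager


class Tracer:
    """Records one structured event per agent step so failures can be traced later."""

    def __init__(self):
        self.run_id = uuid.uuid4().hex
        self.events: list[dict] = []

    @contextmanager
    def step(self, name: str, **inputs):
        event = {"run_id": self.run_id, "step": len(self.events) + 1,
                 "name": name, "inputs": inputs}
        start = time.perf_counter()
        try:
            yield event                 # the caller can attach outputs to the event
            event["status"] = "ok"
        except Exception as exc:
            event["status"] = "error"
            event["error"] = repr(exc)
            raise                       # record the failure, but don't swallow it
        finally:
            event["ms"] = round((time.perf_counter() - start) * 1000, 2)
            self.events.append(event)

    def dump(self) -> str:
        """One JSON object per line, ready for a log pipeline."""
        return "\n".join(json.dumps(e, default=str) for e in self.events)
```

When step three goes wrong, you can read back exactly what the agent saw and did in steps one and two, which is the part deterministic workflows give you for free.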

Guardrails: how to build safe agentic AI

Every serious agentic AI deployment needs guardrails. Here's what that looks like in practice:

Human-in-the-loop checkpoints. For high-stakes actions (financial transactions, customer communications, production deployments), require explicit human approval before the agent proceeds. LangGraph supports this through its persistence layer: a graph can be interrupted at a checkpoint, surface its proposed action, and resume only after a human approves.
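Stripped of framework specifics, the pattern looks roughly like this. High-stakes actions are not executed when the agent proposes them; they are parked until a reviewer decides. This sketch is framework-agnostic and does not use LangGraph's API. `HIGH_STAKES`, `ApprovalGate`, and the action names are hypothetical. A production version would persist the parked actions so a run can resume later, possibly in another process.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional

Executor = Callable[[str, dict], Any]
HIGH_STAKES = {"send_payment", "send_email", "deploy"}  # hypothetical action names


@dataclass
class PendingAction:
    action: str
    args: dict
    status: str = "awaiting_approval"


@dataclass
class ApprovalGate:
    execute: Executor
    pending: list = field(default_factory=list)

    def propose(self, action: str, args: dict) -> Any:
        """Run low-stakes actions now; park high-stakes ones until a human decides."""
        if action not in HIGH_STAKES:
            return self.execute(action, args)
        item = PendingAction(action, args)
        self.pending.append(item)
        return item  # the agent stops here and reports that approval is needed

    def decide(self, item: PendingAction, approved: bool) -> Optional[Any]:
        """Record the reviewer's decision; only approved actions are executed."""
        self.pending.remove(item)
        item.status = "approved" if approved else "rejected"
        return self.execute(item.action, item.args) if approved else None
```

The gate sits between the agent and the executor. That means the agent cannot bypass it by wording its request differently, only by calling a different action name, which the permission boundary should also prevent.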

Action boundaries. Define what the agent can't do. This is more important than defining what it can. Set explicit permissions: this agent can read but not write to the database. This agent can draft emails but not send them. OpenAI's function calling and the equivalent tool-calling features in open-source frameworks let you restrict which tools each agent is given.
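A deny-by-default permission check can be as small as the sketch below. Each agent gets an explicit set of (tool, mode) grants, and anything not granted is refused. The agent names, tools, and modes are hypothetical. Enforcing the check in your own code, at the point where tools are executed, means it holds even if the model asks for something it shouldn't.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Permission:
    tool: str
    mode: str  # e.g. "read", "write", "draft"


class PermissionDenied(Exception):
    pass


AGENT_POLICIES = {  # hypothetical grants; anything not listed is denied
    "support_agent": {Permission("crm", "read"), Permission("email", "draft")},
    "ops_agent": {Permission("crm", "read"), Permission("crm", "write")},
}


def authorize(agent: str, tool: str, mode: str) -> None:
    """Deny by default: raise unless this exact (tool, mode) pair was granted."""
    if Permission(tool, mode) not in AGENT_POLICIES.get(agent, set()):
        raise PermissionDenied(f"{agent} may not {mode} {tool}")


def tools_for(agent: str) -> set[str]:
    """The only tools that should be exposed to this agent's model at all."""
    return {p.tool for p in AGENT_POLICIES.get(agent, set())}
```

`tools_for` limits what the model is even told about, and `authorize` is the second line of defense when a call is actually made.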

Output validation. Every agent output should be checked against business rules, format requirements, and confidence thresholds. If an agent's confidence is below a threshold on a medical diagnosis or financial recommendation, it escalates to a human — no exceptions.
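Here is a sketch of that check for a hypothetical refund agent. It applies business rules and a confidence floor, and any failed check escalates to a human. The thresholds, action names, and `AgentOutput` fields are placeholders. How the confidence score is produced (a separate classifier, model log-probabilities, or the model's own self-report) is an assumption outside this sketch, and self-reported confidence in particular may be poorly calibrated.

```python
from dataclasses import dataclass

CONFIDENCE_FLOOR = 0.85   # hypothetical threshold; tune per use case
MAX_AUTO_REFUND = 100.00  # hypothetical business rule


@dataclass
class AgentOutput:
    action: str
    amount: float
    confidence: float  # assumed to come from a scorer outside this sketch


def validate(output: AgentOutput) -> tuple[str, list[str]]:
    """Return ("accept", []) or ("escalate", reasons); any failed check escalates."""
    reasons = []
    if output.action not in {"refund", "replace", "no_action"}:
        reasons.append(f"unknown action {output.action!r}")
    if output.amount < 0:
        reasons.append("negative amount")
    if output.amount > MAX_AUTO_REFUND:
        reasons.append("amount exceeds auto-approval limit")
    if output.confidence < CONFIDENCE_FLOOR:
        reasons.append(f"confidence {output.confidence:.2f} below {CONFIDENCE_FLOOR}")
    return ("escalate", reasons) if reasons else ("accept", [])
```

Collecting every failed reason, rather than stopping at the first, gives the human reviewer the full picture in one place.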

Monitoring and metrics. Track success rates, latency, confidence scores, error rates, and cost per task. You need dashboards and alerting, not just logs. These metrics tell you when an agent is degrading before it causes real damage.
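Assuming each task run is logged as a record with its outcome, latency, and cost, the dashboard numbers and alert rules reduce to a few aggregations. The record fields, the nearest-rank p95 choice, and the alert thresholds below are illustrative placeholders, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class TaskRecord:
    succeeded: bool
    latency_s: float
    cost_usd: float


def summarize(records: list[TaskRecord]) -> dict:
    """Aggregate per-task records into the numbers a dashboard would plot."""
    if not records:
        raise ValueError("no records to summarize")
    n = len(records)
    latencies = sorted(r.latency_s for r in records)
    rank = -(-95 * n // 100)  # nearest-rank p95, computed with exact integer ceiling
    return {
        "tasks": n,
        "success_rate": sum(r.succeeded for r in records) / n,
        "p95_latency_s": latencies[rank - 1],
        "cost_per_task_usd": sum(r.cost_usd for r in records) / n,
    }


def alerts(summary: dict, min_success: float = 0.9, max_p95_s: float = 30.0) -> list[str]:
    """Hypothetical alert rules; the thresholds are placeholders to tune."""
    out = []
    if summary["success_rate"] < min_success:
        out.append("success rate below target")
    if summary["p95_latency_s"] > max_p95_s:
        out.append("p95 latency above target")
    return out
```

Tracking p95 rather than average latency matters for agents. A few runs that loop through many retries can be slow and expensive while the mean still looks healthy.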

Feedback loops for improvement. Use human feedback and direct outcome tracking to improve agent performance over time. In practice this usually means refining prompts, tool definitions, and retrieval data based on success/failure signals. If you train or fine-tune your own models, it can also mean techniques such as reinforcement learning from human feedback (RLHF). This learning stage is what makes agentic AI systems get better instead of staying stuck at the same level of mediocrity.

Multi-agent systems: when one agent isn't enough

Real-world problems often exceed what a single agent can handle. Multi-agent systems use multiple specialized autonomous agents that collaborate on complex tasks. One agent handles research, another handles writing, a third handles validation — and a coordinator agent manages the workflow.

Frameworks like CrewAI and LangGraph provide the orchestration layer for multi-agent systems. The agents share context through a common memory or state, divide up the workload, and can specialize in different capabilities: one might be optimized for code generation while another excels at data analysis.
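Here is a deliberately simple sketch of the coordinator pattern. Each "agent" is a plain function standing in for an LLM-backed agent, and a shared dictionary plays the role of the shared memory. This is a fixed sequential pipeline and not CrewAI's or LangGraph's API. A real coordinator would often use an LLM to decide which agent runs next. All function names are hypothetical.

```python
from typing import Callable

Agent = Callable[[dict], dict]  # reads the shared context, returns updates to it


def researcher(ctx: dict) -> dict:
    # Stand-in for an LLM agent with search tools.
    return {"notes": [f"fact about {ctx['topic']}"]}


def writer(ctx: dict) -> dict:
    # Stand-in for an LLM agent that drafts from the researcher's notes.
    return {"draft": f"Report on {ctx['topic']}: " + "; ".join(ctx["notes"])}


def validator(ctx: dict) -> dict:
    # Checks that every research note made it into the draft.
    return {"approved": all(note in ctx["draft"] for note in ctx["notes"])}


def coordinator(topic: str, pipeline: list[tuple[str, Agent]]) -> dict:
    """Run specialist agents in order over one shared context."""
    ctx: dict = {"topic": topic, "log": []}
    for name, agent in pipeline:
        updates = agent(ctx)
        ctx.update(updates)
        ctx["log"].append((name, sorted(updates)))  # who wrote which keys, for debugging
    return ctx
```

Even in this toy, the writer silently depends on a key the researcher wrote. The log of which agent wrote which keys hints at why every added agent grows the debugging surface.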

The challenge with multi-agent systems is complexity. Each additional agent multiplies the debugging surface. Communication between agents can introduce latency and errors. Start with a single agent that does one thing well, then expand to multi-agent only when you've proven the need.

Building your first agentic AI system

If you're ready to move from understanding to building, here's the practical path:

- Pick a specific task. Not "automate sales." Instead: "when a new lead fills out the contact form, research their company, score the lead, and draft a personalized follow-up email for sales to review."

- Choose your framework. For full-code agent development, LangGraph (Python, open source) gives you the most control. For faster prototyping, CrewAI provides higher-level abstractions. For personal agent workflows, OpenClaw runs on your own machine with real tool access. See our AI agent framework comparison for the full breakdown.

- Define your guardrails early. Before writing the agent logic, define what it can and can't do. What data sources can it access? What actions require human approval? What happens when it fails?

- Start with one LLM, one tool. Don't build a multi-agent system on day one. Build a single agent that calls one API reliably. Then add complexity.

- Measure everything. Track cost, latency, success rate, and user satisfaction from the first run. These metrics drive every optimization decision.
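Here is one way to write the guardrails down as data before any agent logic exists, using the lead follow-up task as the example. `AgentSpec` is a hypothetical structure holding the allowed tools, approval requirements, step and cost budgets, and a failure action. Every tool name and limit is a placeholder. Note that the agent can draft an email but has no tool for sending one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Everything decided before writing agent logic. All values are illustrative."""
    task: str
    trigger: str
    readable_sources: frozenset
    allowed_tools: frozenset
    needs_human_approval: frozenset
    max_steps: int = 10
    max_cost_usd: float = 0.50
    on_failure: str = "notify_sales_ops"

    def check(self, tool: str, step: int, spent_usd: float) -> str:
        """Return 'run', 'ask_human', or 'stop' for a proposed tool call."""
        if tool not in self.allowed_tools:
            return "stop"
        if step > self.max_steps or spent_usd > self.max_cost_usd:
            return "stop"  # budget exhausted: hand off via on_failure
        return "ask_human" if tool in self.needs_human_approval else "run"


LEAD_FOLLOW_UP = AgentSpec(
    task="Research the lead's company, score the lead, draft a follow-up for sales",
    trigger="contact_form_submitted",
    readable_sources=frozenset({"crm", "company_website"}),
    allowed_tools=frozenset({"web_search", "crm_read", "score_lead",
                             "draft_email", "crm_update_lead"}),
    needs_human_approval=frozenset({"crm_update_lead"}),
)
```

Because the spec is frozen data, it can be reviewed like a config file before the agent ships. The agent loop simply calls `check` before every tool call.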

The future: where agentic AI is going

The trajectory is clear: from AI tools you prompt to AI agents you delegate to. The 2024 Stack Overflow Developer Survey found that 76% of respondents are using or planning to use AI tools in their development process. But only a fraction are using truly agentic systems.

The gap between "AI that answers questions" and "AI that completes initiatives" is closing fast. Every major AI platform (OpenAI, Anthropic, Google, Microsoft) is investing heavily in agent capabilities. The ecosystem of open source frameworks and scalable deployment tools is maturing.

The question isn't whether agentic AI will transform business processes and software development. It's whether your organization builds the competence now or scrambles to catch up later. Start with a real use case, pick a framework, set your guardrails, and ship something. The technology is ready. The bottleneck is whether your business goals are specific enough for an agent to execute.